Sean Rhea of Meraki

Sanjit Biswas opened the Meraki portion of Wireless Field Day 4. He told us that Meraki is still hiring and has started construction on a new office. At the time of the recording, only 45 days had passed since the acquisition. Meraki is continuing to develop its product line, with a variety of devices in the pipeline. Because the hardware development cycle is very long, it will be some time before there are Meraki/Cisco-designed APs. As a result of the acquisition, Meraki can now expand internationally into Asia Pacific and other parts of Europe where it had no presence (previously, Meraki was present only in the UK and Ireland markets). Meraki is investing in richer features, and its Systems Manager has been installed on about one million devices so far.

Cisco Update on the Meraki Acquisition with Sanjit Biswas from Stephen Foskett on Vimeo.

Sean Rhea took us under the covers of Meraki's Dashboard. He has a PhD in distributed systems from UC Berkeley and joined Meraki in November 2007.

Meraki (now part of Cisco) Cloud Architecture Deep Dive with Sean Rhea from Stephen Foskett on Vimeo.

Meraki customers are partitioned across different servers, called shards. Each shard is effectively a 1U RAID server plus a 1U backup server (in a completely different data center/provider). One of the shards is the master and acts as a demultiplexer.
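The master's demultiplexing role can be pictured as a lookup from a customer network to the shard that owns it. The following is a minimal Python sketch under stated assumptions: the `ShardDirectory` class, the use of a network ID as the partition key, and the least-loaded placement policy are all hypothetical illustrations, not Meraki's actual design.

```python
class ShardDirectory:
    """Hypothetical master-side directory mapping customer networks to shards.

    Assumptions for illustration only: customers are identified by a
    network_id, and each new network goes to the least-loaded shard.
    """

    def __init__(self, shards):
        self.shards = list(shards)
        self.assignment = {}                     # network_id -> shard name
        self.load = {s: 0 for s in self.shards}  # networks pinned to each shard

    def assign(self, network_id):
        # Once placed, a network stays on its shard, so all of a customer's
        # data lives in one place.
        if network_id not in self.assignment:
            shard = min(self.shards, key=lambda s: self.load[s])
            self.assignment[network_id] = shard
            self.load[shard] += 1
        return self.assignment[network_id]

    def route(self, network_id):
        # Demultiplex: return the shard that should handle this request.
        return self.assignment[network_id]


directory = ShardDirectory(["shard-a", "shard-b"])
for net in ["net-1", "net-2", "net-3"]:
    directory.assign(net)
print(directory.route("net-2"))  # shard-b
```

Pinning each network to a single shard keeps per-customer queries local to one server; the placement policy is the part most likely to differ in a real system.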

Thousands of Meraki devices connect to each shard.

Hundreds of thousands of devices check in per day.

There are 300 GB of stats dating back more than a year.

New data is gathered from devices every 45 seconds.

If you are actively viewing a device in Dashboard, data is gathered every second.

One engineering challenge is reaching devices behind NATs. Meraki's mtunnel system lets them talk to their devices even when the devices are behind a NAT. The tunnel is fully encrypted, and the traffic sent over it is SSL. mtunnel is used across the entire Meraki hardware platform.

This custom secure tunnel requires only 2 packets per device every 25 seconds.
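To put the tunnel's upkeep cost in perspective, here is a small Python calculation using the 2 packets per 25 seconds figure from the talk. The shard size of 5,000 devices is an assumed example value (the talk only says "thousands of devices" per shard), not a reported number.

```python
SECONDS_PER_DAY = 86_400


def keepalive_packets_per_second(devices, packets=2, interval_s=25):
    """Tunnel upkeep traffic for a shard, in packets per second."""
    return devices * packets / interval_s


def keepalive_packets_per_device_per_day(packets=2, interval_s=25):
    """Tunnel upkeep packets attributable to one device over a day."""
    return packets * SECONDS_PER_DAY // interval_s


print(keepalive_packets_per_second(5_000))     # 400.0
print(keepalive_packets_per_device_per_day())  # 6912
```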

It looks like IPsec, but it is not point-to-point: traffic may be routed from one shard to another.

Devices speak SSL over the tunnel. Each AP ships with a certificate containing the shared secret.

The same backend infrastructure is shared by the switch and Systems Manager platforms.

Another problem is how to gather this information while minimizing network overhead for both Meraki and its customers, and minimizing CPU cost for Meraki.

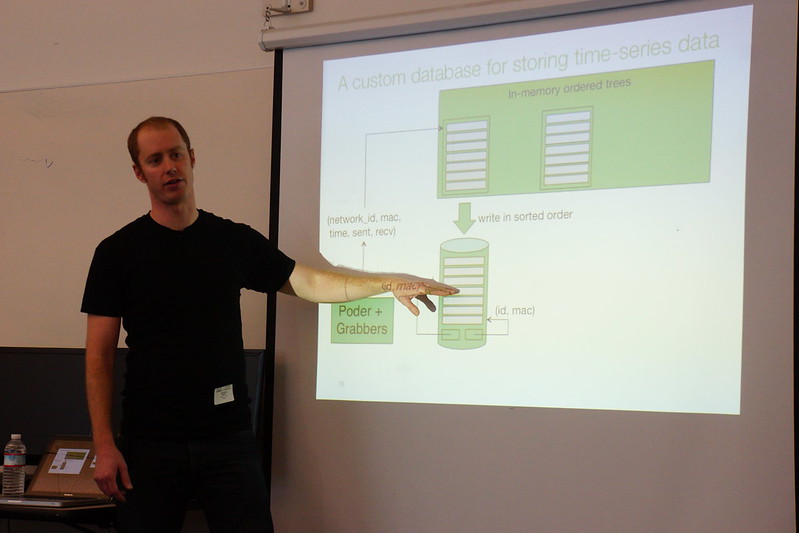

Meraki has developed a custom database tuned for statistical data. It achieves SSD-like speeds on inexpensive spinning hard disks.

Poder is a relatively new system at Meraki, first developed in 2009 and then fully revamped in 2011. The idea is to gather stats from hundreds of thousands of devices via each device's internal web server.

The naive approach

Overview

• Each device runs a small web server

• Each shard runs one grabber daemon per statistic type (usage, syslogs, uptime, etc.)

• The grabber fetches stats from devices as XML over HTTP

Implementation

• 1 process per grabber, N+2 threads per process

• 1 thread to query DB for new devices to fetch from

• N threads to perform blocking HTTP fetches from devices

• 1 thread to insert fetch results into DB
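A minimal Python sketch of the N+2 thread layout above. The names are hypothetical, and the database and HTTP calls are replaced by stand-ins (an iterable of device IDs, a caller-supplied `fetch` function, and a dict) so the structure runs on its own:

```python
import queue
import threading

STOP = object()  # sentinel telling a thread to shut down


def run_grabber(device_ids, fetch, n_fetchers=4):
    """Naive grabber layout: 1 DB-query thread, N fetch threads, 1 DB-insert thread.

    Hypothetical stand-ins: `device_ids` replaces the DB query, `fetch` replaces
    the blocking HTTP request, and a dict replaces the DB insert.
    """
    to_fetch = queue.Queue()
    fetched = queue.Queue()
    inserted = {}

    def query_db():
        for device in device_ids:
            to_fetch.put(device)
        for _ in range(n_fetchers):
            to_fetch.put(STOP)  # one sentinel per fetch thread

    def fetch_worker():
        while True:
            device = to_fetch.get()
            if device is STOP:
                fetched.put(STOP)
                return
            fetched.put((device, fetch(device)))  # blocks this thread only

    def insert_db():
        finished = 0
        while finished < n_fetchers:
            item = fetched.get()
            if item is STOP:
                finished += 1
            else:
                device, stats = item
                inserted[device] = stats

    threads = [threading.Thread(target=query_db), threading.Thread(target=insert_db)]
    threads += [threading.Thread(target=fetch_worker) for _ in range(n_fetchers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return inserted


stats = run_grabber(range(5), lambda device: {"uptime_s": device * 60}, n_fetchers=3)
print(stats[4])  # {'uptime_s': 240}
```

Each fetch thread sits idle while its request is outstanding, so I/O parallelism in this design costs one thread per in-flight fetch.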

Naive approach pros and cons

• TCP easily carries arbitrarily large responses

• Becomes expensive at scale

• A single HTTP fetch of an empty web page costs 10 packets and 510 bytes

• Every grabber does its own fetch; connections are not shared

• Many threads for I/O parallelism means many context switches

• Limited to one fetch per node every 10 minutes

A high-performance approach (4th gen)

• The first thing they built was an event-driven RPC engine

• Non-blocking I/O; talks to 10k devices in 20 seconds

• Uses UDP and Google Protocol Buffers (a binary encoding format), which greatly reduces packet size

• Stats are obtained through different modules, such as the lldp module (each module has its own thread)

• Each of the different grabbers reads from the database
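The event-driven, non-blocking design can be sketched with Python's `asyncio`: one UDP socket and one event loop, with outstanding requests matched to replies by a request ID instead of one blocking thread per fetch. This is a minimal sketch under assumptions: the 4-byte request-ID header is a hypothetical stand-in for Protocol Buffers encoding, and `FakeDevice` is a hypothetical local responder used only so the example runs.

```python
import asyncio
import struct

# Hypothetical wire format standing in for Protocol Buffers:
# a 4-byte big-endian request id followed by an opaque payload.
HEADER = struct.Struct("!I")


class RpcClient(asyncio.DatagramProtocol):
    """Event-driven UDP RPC client: one socket, many requests in flight."""

    def __init__(self):
        self.transport = None
        self.pending = {}  # request id -> Future waiting for the reply
        self.next_id = 0

    def connection_made(self, transport):
        self.transport = transport

    def datagram_received(self, data, addr):
        if len(data) < HEADER.size:
            return  # ignore malformed datagrams
        (req_id,) = HEADER.unpack_from(data)
        fut = self.pending.pop(req_id, None)
        if fut is not None and not fut.done():
            fut.set_result(data[HEADER.size:])

    async def call(self, addr, payload, timeout=1.0):
        self.next_id = (self.next_id + 1) & 0xFFFFFFFF
        req_id = self.next_id
        fut = asyncio.get_running_loop().create_future()
        self.pending[req_id] = fut
        self.transport.sendto(HEADER.pack(req_id) + payload, addr)
        try:
            return await asyncio.wait_for(fut, timeout)
        except asyncio.TimeoutError:
            self.pending.pop(req_id, None)
            return None  # UDP can drop packets; retry on the next polling cycle


class FakeDevice(asyncio.DatagramProtocol):
    """Hypothetical device that answers every request with its uptime."""

    def __init__(self, uptime):
        self.uptime = uptime
        self.transport = None

    def connection_made(self, transport):
        self.transport = transport

    def datagram_received(self, data, addr):
        # Echo the request id so the client can match the reply.
        self.transport.sendto(data[:HEADER.size] + str(self.uptime).encode(), addr)


async def poll_all(n_devices):
    loop = asyncio.get_running_loop()
    device_transports = []
    for i in range(n_devices):
        transport, _ = await loop.create_datagram_endpoint(
            lambda i=i: FakeDevice(i), local_addr=("127.0.0.1", 0))
        device_transports.append(transport)
    client_transport, client = await loop.create_datagram_endpoint(
        RpcClient, local_addr=("127.0.0.1", 0))
    addrs = [t.get_extra_info("sockname") for t in device_transports]
    try:
        # All requests are in flight at once; no thread blocks on any device.
        return await asyncio.gather(*(client.call(a, b"uptime?") for a in addrs))
    finally:
        for t in device_transports + [client_transport]:
            t.close()


replies = asyncio.run(poll_all(20))
print(replies[:3])  # [b'0', b'1', b'2']
```

The trade-off versus TCP is that lost datagrams become the caller's problem; in this sketch a timeout simply returns `None`.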

Example: the probing clients module and the lldp module

A request object is passed through each module, and each module appends its information requests to the request sent to the AP. Requests sent to APs often look different from those sent to switches, because the two kinds of hardware perform different functions. The modules are single-threaded, block on DB access, and most are 200-400 lines of straight-line Scala code, so there are no locking or synchronization issues to worry about.
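The request/module flow just described can be sketched in Python (the talk says the real modules are Scala). Everything here is hypothetical: the `Request` shape, the module keys, and the assumption that only APs get a probing-clients item. It shows one request object passing through every module, each appending its own item, and one combined response being split back out to the modules.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    """One aggregated request to a single device (hypothetical shape)."""
    device_type: str                           # e.g. "ap" or "switch"
    items: list = field(default_factory=list)  # stats being asked for


class Module:
    """Base class: a module asks for one item and consumes its answer."""
    key = ""

    def __init__(self):
        self.results = []  # a real module would write to the DB instead

    def wants(self, device_type):
        return True

    def add_to(self, request):
        if self.wants(request.device_type):
            request.items.append(self.key)

    def handle(self, value):
        # Straight-line work on this module's own state; no locks needed.
        self.results.append(value)


class ProbingClientsModule(Module):
    key = "probing_clients"

    def wants(self, device_type):
        return device_type == "ap"  # assumed: only APs report probing clients


class LldpModule(Module):
    key = "lldp"  # assumed: both APs and switches report LLDP neighbors


def build_request(device_type, modules):
    request = Request(device_type)
    for module in modules:  # the request object passes through every module
        module.add_to(request)
    return request


def dispatch(response, modules):
    # One combined response; each module receives only its own piece.
    for module in modules:
        if module.key in response:
            module.handle(response[module.key])


modules = [ProbingClientsModule(), LldpModule()]
print(build_request("ap", modules).items)      # ['probing_clients', 'lldp']
print(build_request("switch", modules).items)  # ['lldp']
dispatch({"lldp": ["switch-port-3"], "probing_clients": ["aa:bb:cc:dd:ee:ff"]}, modules)
```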

Poder aggregates the requests and responses of the different modules. Overhead for an empty response is as little as 2 packets and 48 bytes, 80-90% less than previous Poder versions. Poder can now fetch data every 45 seconds.
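Using only figures quoted in the talk (10 packets and 510 bytes for an empty HTTP fetch in the naive approach, 2 packets and 48 bytes for an empty Poder response), a quick Python check shows that the savings roughly match the quoted 80-90% range:

```python
# Figures quoted in the talk for an empty response.
NAIVE_HTTP = {"packets": 10, "bytes": 510}
PODER = {"packets": 2, "bytes": 48}


def reduction(old, new):
    """Fractional saving of `new` relative to `old`."""
    return 1 - new / old


for metric in ("packets", "bytes"):
    print(metric, f"{reduction(NAIVE_HTTP[metric], PODER[metric]):.0%}")
# packets 80%
# bytes 91%

# Polling frequency: one fetch per 10 minutes versus one every 45 seconds.
print(f"{600 / 45:.1f}x more frequent")  # 13.3x more frequent
```

The naive figure is per HTTP fetch per grabber, so aggregating several modules into one exchange saves more than this single-response comparison suggests.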

What to do with the data?

Where to store it and how to get it back out to display?

As a rough average, the storage cost of all the data that can be collected works out to: 3,600 clients × 10 apps × 3 records per hour × 72 bytes per record ≈ 63 GB per year.
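The estimate multiplies out as follows. Reading "3600 clients (10 apps)" as 3,600 clients times 10 apps each is an interpretation, but it reproduces the quoted figure when "gb" is read as binary gigabytes (GiB):

```python
clients = 3_600
apps_per_client = 10
records_per_hour = 3
bytes_per_record = 72
hours_per_year = 24 * 365

bytes_per_year = (clients * apps_per_client * records_per_hour
                  * bytes_per_record * hours_per_year)
print(bytes_per_year)                         # 68117760000
print(f"{bytes_per_year / 2**30:.1f} GiB")    # 63.4 GiB
print(f"{bytes_per_year / 10**9:.1f} GB")     # 68.1 GB
```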

Standard OLTP databases (Postgres, MySQL) are not very good at clustering related rows together on disk. Unless your working set fits in RAM, queries take many seeks. On a traditional hard drive, all those seeks can stretch drawing the overview dashboard graph to as long as 18 minutes.
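Clustering matters because a dashboard query for one device over a time window should touch one contiguous run of data rather than rows scattered across the disk. A minimal Python sketch, assuming a hypothetical sort key of `(network_id, mac, timestamp)`, shows that with rows kept in key order the query becomes two binary searches plus one sequential slice:

```python
import bisect

# Hypothetical rows: (network_id, mac, timestamp, bytes_used), kept sorted by key.
rows = sorted([
    (1, "aa:aa", 100, 5), (1, "aa:aa", 145, 7), (1, "aa:aa", 190, 6),
    (1, "bb:bb", 100, 1), (2, "aa:aa", 100, 9), (1, "aa:aa", 235, 4),
])


def range_scan(rows, network_id, mac, t_start, t_end):
    """Return rows for one (network_id, mac) with t_start <= timestamp < t_end.

    Because rows are sorted by key, the matches are contiguous: locate both
    ends with binary search, then read one slice (a sequential scan on disk).
    """
    lo = bisect.bisect_left(rows, (network_id, mac, t_start))
    hi = bisect.bisect_left(rows, (network_id, mac, t_end))
    return rows[lo:hi]


print(range_scan(rows, 1, "aa:aa", 145, 236))
# [(1, 'aa:aa', 145, 7), (1, 'aa:aa', 190, 6), (1, 'aa:aa', 235, 4)]
```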

With the custom database they built (LittleTable), the same graph takes 2 seconds to draw, reading at about 100 MB/s. The data is not pre-aggregated, because aggregation discards useful information: peaks get averaged into the surrounding data and no longer show up as peaks.
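A tiny Python illustration of why aggregation loses peaks, using made-up sample values: averaging every three samples turns a spike of 95 into about 33.

```python
def downsample_mean(samples, factor):
    """Average each run of `factor` samples into one value (lossy aggregation)."""
    chunks = [samples[i:i + factor] for i in range(0, len(samples), factor)]
    return [sum(chunk) / len(chunk) for chunk in chunks]


usage = [2, 3, 2, 95, 3, 2]  # hypothetical per-interval usage with one spike
print(max(usage))                                          # 95
print([round(x, 1) for x in downsample_mean(usage, 3)])    # [2.3, 33.3]
```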

Data is written in order, with footers recording the network_id and MACs, and files are flushed every 10 minutes for durability (e.g., against power outages). APs store statistics locally, so they can be queried again when the system comes back up. After 28 days (an even multiple of seven, to make the merge of data "nice"), the files are merged and written back to disk, still in order. Maximum file size is 2 GB to avoid dealing with 64-bit file pointers.
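The periodic merge of in-order files is a k-way merge of sorted runs. Here is a minimal Python sketch using the standard library's `heapq.merge`; the function name is hypothetical, and a row-count cap stands in for the real 2 GB per-file byte limit:

```python
import heapq


def merge_runs(runs, max_rows_per_file):
    """Merge already-sorted runs into sorted output files, capping file size.

    Hypothetical stand-in: rows are plain sortable values and the cap is a
    row count rather than the 2 GB byte limit described in the talk.
    """
    files, current = [], []
    for row in heapq.merge(*runs):  # streams rows in global sorted order
        current.append(row)
        if len(current) == max_rows_per_file:
            files.append(current)  # "close" this file and start a new one
            current = []
    if current:
        files.append(current)
    return files


print(merge_runs([[1, 4, 7], [2, 5, 8], [3, 6, 9]], max_rows_per_file=4))
# [[1, 2, 3, 4], [5, 6, 7, 8], [9]]
```

Because every input run is already in order, the merge reads each file sequentially exactly once, which suits spinning disks.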

Data on Meraki's own servers is stored unencrypted for speed. The data centers where it is kept meet SAS 70 requirements. When the data is stored offsite for tertiary redundancy, it is encrypted.
